Robotic News

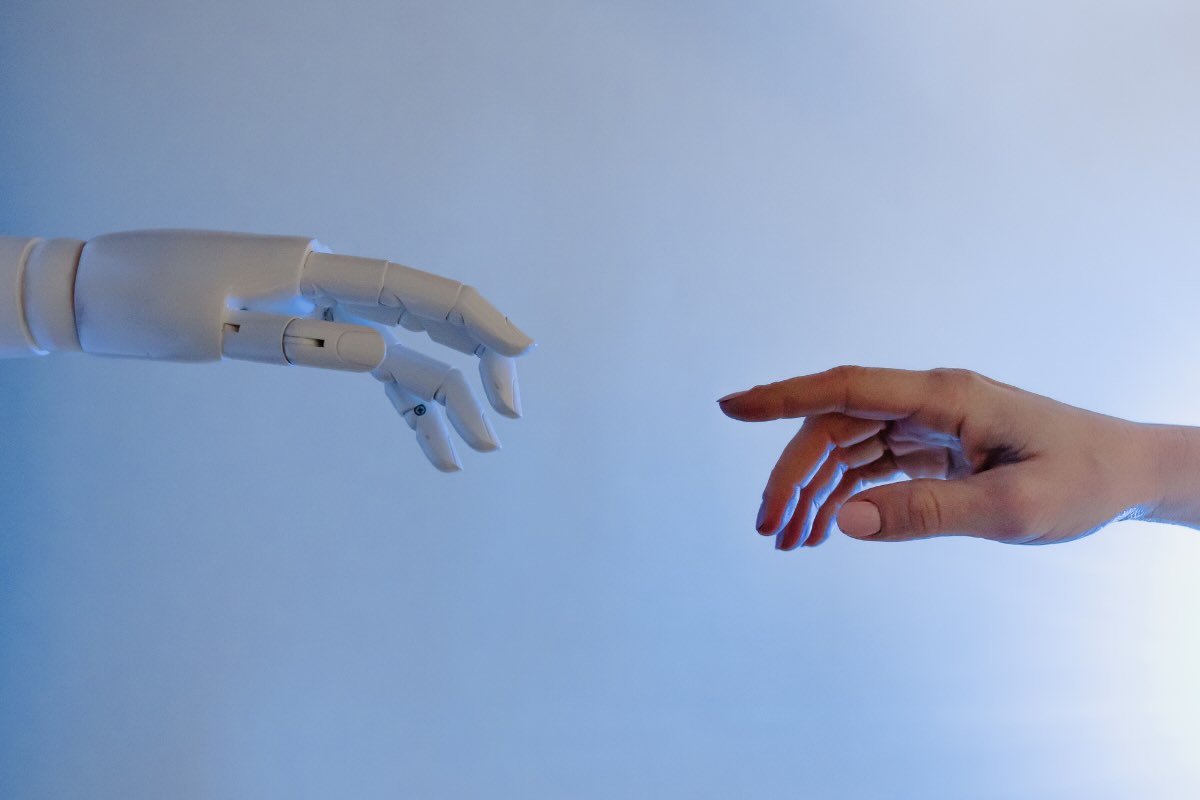

Good news for those desperately seeking love: in a few years it will be possible to marry a robot

Many people cannot find love but would not want to be alone. The new frontiers …

Science & Technology

We use PCs, tablets, and smartphones every day, and how dirty they get! Here is how to clean our devices safely

We use our electronic devices every day: PCs, tablets, smartphones, but we pay no attention to keeping them clean. Here is how …

Curiosities

Don't throw away your toothbrush: 10 truly useful ways to recycle it

Do you have an old toothbrush and are about to throw it away? Don't: there are at least 10 …